High-quality Relightable Free-Viewpoint Video using a Skinned Template Model

The Relightable Free-Viewpoint Video Project

Abstract:

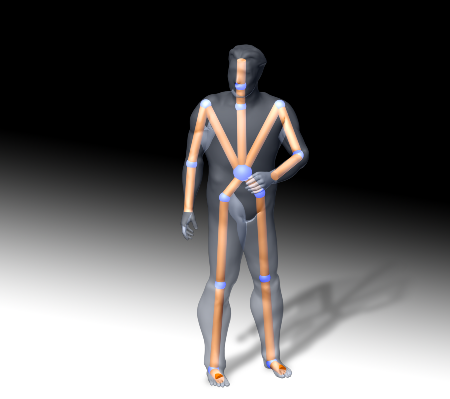

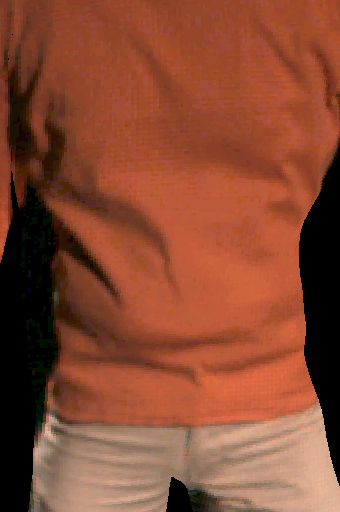

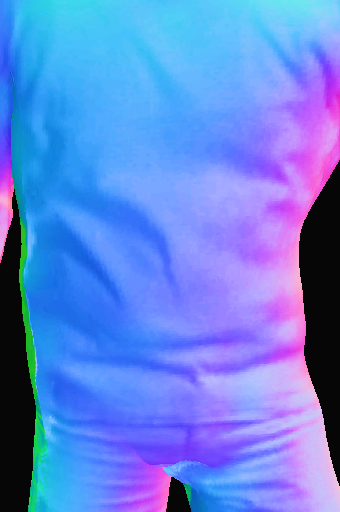

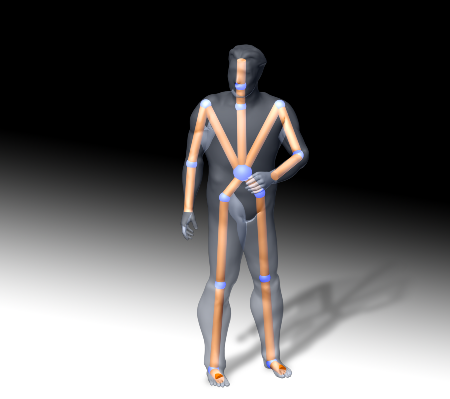

By means of passive optical motion capture, real people can be authentically animated and photo-realistically textured. To import real-world characters into virtual environments, however, their surface reflectance properties must also be known. We describe a video-based modeling approach that captures human shape and motion as well as reflectance characteristics from a handful of synchronized video recordings. The presented method recovers spatially varying surface reflectance properties of clothes from multi-view video footage. The resulting model description enables us to realistically reproduce the appearance of animated virtual actors under different lighting conditions, as well as to interchange surface attributes among different people, e.g. for virtual dressing. Our contribution can be used to create 3D renditions of real-world people under arbitrary novel lighting conditions on standard graphics hardware.

Movie in .avi format

Related Publications

Relightable 3D Video Of Human Actors

We have augmented our original model-based pipeline for capturing and rendering free-viewpoint videos so that it estimates not only dynamic texture information but also dynamic surface reflectance properties. This way, free-viewpoint videos can also be rendered under novel virtual lighting conditions.
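To illustrate why estimated reflectance makes relighting possible, the following minimal sketch shades a single surface point under a new light. It assumes a simple Phong reflectance model chosen purely for illustration; this page does not state which reflectance model the pipeline uses. The function `relight_point` and the parameters `kd`, `ks` and `shininess` are hypothetical names, not part of the project's software.

```python
import math


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))


def relight_point(normal, view_dir, light_dir, light_rgb, kd, ks, shininess):
    """Shade one surface point under a novel directional light.

    Assumption: a Phong model with per-point diffuse albedo kd (RGB),
    scalar specular weight ks, and a specular exponent. These stand in
    for whatever spatially varying reflectance parameters were estimated.
    """
    n = normalize(normal)
    v = normalize(view_dir)
    l = normalize(light_dir)

    n_dot_l = dot(n, l)
    if n_dot_l <= 0.0:
        # The light is behind the surface, so the point receives no light.
        return (0.0, 0.0, 0.0)

    # Mirror the light direction about the normal for the specular lobe.
    r = tuple(2.0 * n_dot_l * nc - lc for nc, lc in zip(n, l))
    specular = max(dot(r, v), 0.0) ** shininess

    # Diffuse term per colour channel plus a shared specular highlight.
    return tuple(lc * (d * n_dot_l + ks * specular)
                 for lc, d in zip(light_rgb, kd))


# Example: a reddish cloth point lit and viewed head-on by white light.
colour = relight_point(
    normal=(0.0, 0.0, 1.0),
    view_dir=(0.0, 0.0, 1.0),
    light_dir=(0.0, 0.0, 1.0),
    light_rgb=(1.0, 1.0, 1.0),
    kd=(0.5, 0.2, 0.1),
    ks=0.3,
    shininess=10,
)
```

Because the per-point parameters are stored separately from the lighting, changing `light_dir` or `light_rgb` yields a new appearance without re-capturing the actor, and swapping `kd` and `ks` between two models corresponds to the exchange of surface attributes mentioned in the abstract.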

Related Publications

Contact

| Christian Theobalt | Naveed Ahmed |
| --- | --- |
| theobalt@mpi-sb.mpg.de | nahmed@mpi-sb.mpg.de |

Max-Planck-Institut für Informatik, Stuhlsatzenhausweg 85, 66123 Saarbrücken, Germany